In the real world of computer vision, the ground truth is constantly shifting. Designs change frequently over several different vehicle makes, states issue new license plate variants, and many other entirely new edge cases emerge daily. To keep our systems highly accurate, Machine Learning at Flock must iterate rapidly—whether that means retraining on new plate designs, applying quick fixes to production models, or experimenting with entirely new state-of-the-art architectures.

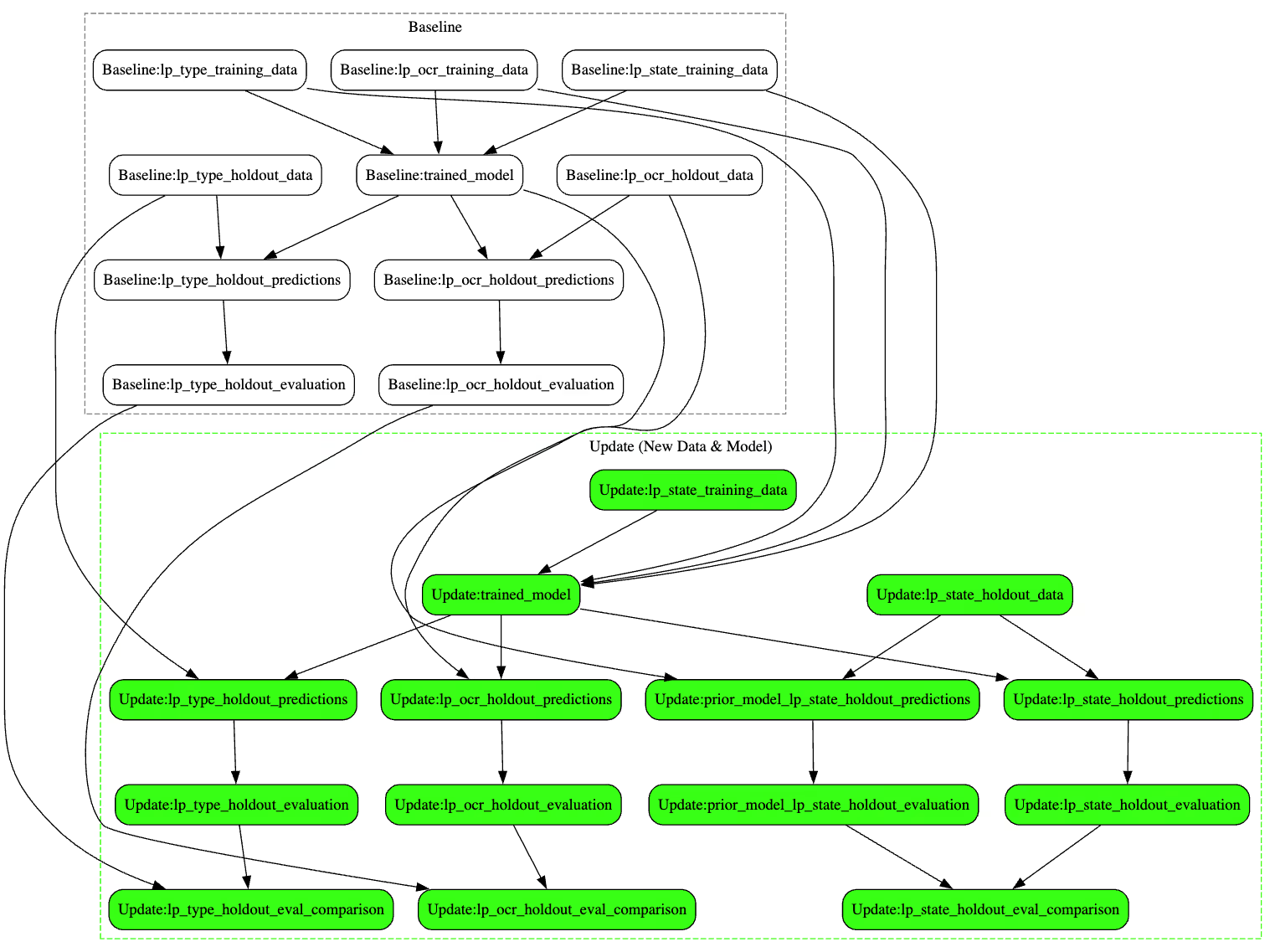

Developing and updating these robust models is rarely a single, monolithic step. To deliver a production-ready model, our ML engineers have to orchestrate a complex set of dependent tasks. We build training and evaluation datasets, train models, generate predictions against holdout sets, summarize evaluation results into metrics, and meticulously compare the new candidates against our production baselines.

When you multiply this process across dozens of model iterations, new data additions, and hyperparameter tweaks, the complexity of managing these tasks skyrockets.

For a long time, we relied on Kubeflow Pipelines to manage this complexity. However, driven by our need to continuously improve and iterate more rapidly, we started feeling the friction of our existing tooling. Here is the story of why we outgrew our traditional ML pipeline tools, and how we built traintrack, our Bazel-inspired ML orchestrator.

The Pains of Traditional ML Pipelines

Kubeflow Pipelines gave us a solid foundation. It provided versioned, container-based execution environments, backed our artifacts to Amazon S3, and allowed us to scale compute massively to run parallel tasks.

.avif)

However, as we pushed to iterate faster, we ran into three systemic friction points that slowed our developer velocity:

- Disconnected Lineage: In Kubeflow, pipeline results couldn't be directly linked as dependencies to tasks in other pipelines. If Pipeline B depended on a model trained in Pipeline A, an engineer had to manually track down the S3 object path from Pipeline A and copy-paste it as an input to Pipeline B. This was cumbersome, manual, and prevented any visibility into the true end-to-end lineage of our work.

- The Local vs. Remote Divide: Operating Kubeflow felt vastly different from local development. We had to maintain separate configurations and scripts for local testing versus remote execution. We found ourselves writing tests just to ensure these separate configurations stayed aligned, which felt like purely overhead work.

- UI-Driven Configuration Drift: Updating configurations in the Kubeflow UI was error-prone. Finding historical work was difficult, and the lack of native Git integration for pipeline states meant reproducibility was often dependent on clicking the right buttons in a web interface rather than committing code.

We realized we didn't just need another "pipeline runner." To solve these systemic issues, we looked outside the traditional ML ecosystem and drew heavy inspiration from mature software engineering build tools. Heavily influenced by the principles laid out in the book Software Engineering at Google, we recognized that our ML pipelines were essentially complex compilation processes. By realizing that every step acts as a target in a massive dependency graph, we found a new way forward.

Introducing Traintrack

traintrack is a Python package we built internally to manage, track, and execute ML modeling tasks. It shifts our paradigm from "running versioned pipelines" to "executing a dependency lineage graph."

Instead of treating ML tasks as isolated workflows, traintrack borrows heavily from software engineering build systems—specifically Bazel—to treat ML tasks as a massive, cacheable Directed Acyclic Graph (DAG).

Here are the core principles driving traintrack's architecture:

1. Connecting Work Over Time

One of our biggest realizations was that ML development isn't a series of isolated pipelines; it's a continuously evolving graph of work.

In the past, if a new license plate design was released, we would have to spin up an entirely new, isolated pipeline to retrain and evaluate the model. With traintrack, tasks are represented as a global graph of connected work able to be updated through time. If we add new training data, we simply add a new node to our existing graph. Traintrack understands the lineage and only executes the specific downstream tasks (training, evaluation, comparison) that depend on that new data, while instantly pulling the cached results for everything else.

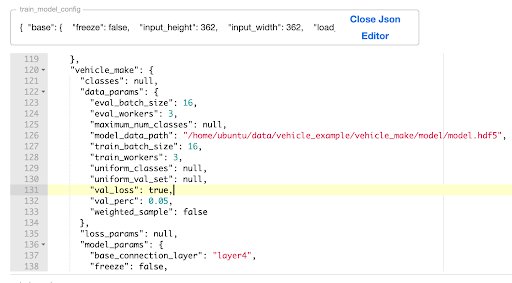

2. Configuration as Code (Git > UI)

All modeling work is defined in configuration YAML files. By moving away from UI-based parameters, our entire ML experimentation state is version-controlled via Git. Copying, editing, comparing, and searching configurations is now done via standard text editors and diff tools. This makes peer-reviewing ML experiments identical to reviewing software pull requests.

3. Bazel-Inspired Architecture

To organize our ML tasks, we adopted Bazel’s concepts of Workspaces, Packages (which we call Experiments), and Targets:

- Workspace: The root directory of the project, containing a TTWORKSPACE.yaml file that sets up project-wide settings.

- Experiment: A logical grouping of work (e.g., all license plate model tasks) housed in a directory containing a TTCONFIG.yaml file.

- Target: Individual tasks defined within an Experiment. Targets specify the Docker image to use, the commit hash of the code, and the arguments needed.

Crucially, Targets can directly specify other Targets as dependencies. Traintrack detects dependencies in the YAML arguments. If a target depends on an artifact in the same experiment, we use the syntax ":trained_model". If it depends on an artifact from another experiment, we use the Bazel-style path "//license_plate/experiment:lp_type_holdout_data".

task: "compare_models"

commit: "9b12726ba3825d72ccdba5bd93505873a32eb769"

args:

old_model_artifact: "//runs/license_plate/2026-04-16_data_update:exported_model"

new_model_artifact: ":exported_model"

labeled_data_prediction_comparisons:

"LP OCR Holdout":

task_name: license_plate_ocr

old_predictions_artifact: ":old_lp_ocr_holdout_predictions"

new_predictions_artifact: ":lp_ocr_holdout_predictions"

"LP State Holdout":

task_name: license_plate_state

old_predictions_artifact: ":old_lp_state_holdout_predictions"

new_predictions_artifact: ":lp_state_holdout_predictions"

…

4. A Strict Docker Contract

Traintrack doesn't care what language or framework a task uses, as long as it adheres to a strict Docker execution contract. Every task must be callable via the command line like this:

$ docker [IMAGE] run [TASK_COMMAND] [CONFIG_YAML] [OUTPUT_DIRECTORY]

When a task declares a dependency on a prior task, traintrack automatically wires the prior task's OUTPUT_DIRECTORY as the input value for the new task. This setup forms the building blocks to dynamically construct any DAG of work.

5. Deterministic Caching

Every task and artifact in traintrack gets a unique ID generated by hashing the task configuration and its parameters. Because the hash incorporates the hashes of all upstream dependencies, we guarantee absolute reproducibility.

Because this hash is directly tied to the task, it becomes incredibly easy to locate and reuse prior work. Engineers can use built-in CLI commands like "traintrack hash" and "traintrack pull" to instantly find and retrieve the underlying artifacts using just the target path and name. Instead of digging through S3 buckets or UI menus, the exact output of any historical experiment is always just a quick command away.

Beyond just historical retrieval, this shared cache acts as a collaborative synchronization point. Because we treat our work as a globally cached graph, S3 caching is smart enough to handle concurrent executions gracefully. If two different engineers trigger a run that depends on the exact same upstream target, and that target is currently processing, the system detects the overlapping request. Instead of spinning up redundant compute jobs, the second engineer's pipeline will simply pause, wait for the first engineer's task to finish, and then instantly build on top of that newly cached artifact.

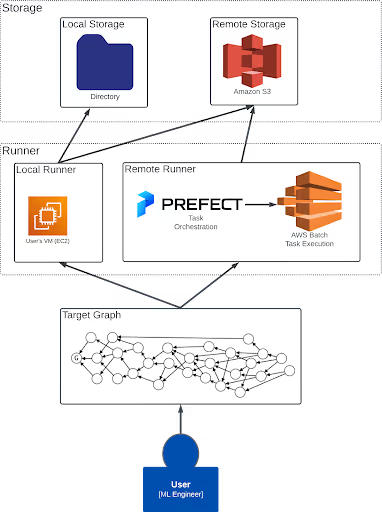

Seamless Execution: From Local to Remote

Perhaps the biggest developer experience win with Traintrack is the unification of local and remote execution. With Kubeflow, testing a pipeline locally before deploying it to the cloud required disjointed tools and mental context switching.

With traintrack, there is zero difference between local development and production execution. The exact same configuration files, target definitions, and commands are used for both. The only difference is a single command-line flag: the --env parameter.

To run an experiment, an engineer types:

$ traintrack run --env [run_env] //path/to:target

Traintrack compiles all dependent tasks into a Target Graph and sends that work to a Runner, saving results to Storage. The [run_env] flag completely controls what Runner and Storage are used:

- Local Run (--env local): Sends work to a Local Runner (typically the user's EC2 VM) and saves artifacts to a local directory. This is perfect for rapid debugging, dry-runs, and ensuring the Docker container behaves correctly without waiting for cloud compute provisioning.

- Remote Run (--env remote): Sends the exact same target graph to our Remote Runner and saves artifacts to Amazon S3.

Replacing Kubernetes with Prefect and AWS Batch

Moving to this architecture allowed us to fundamentally rethink our underlying infrastructure. Kubeflow required us to maintain a heavy, complex Kubernetes cluster—a significant operational burden for our platform team.

Because Traintrack simply needs to execute containerized tasks in a graph, we replaced the Kubernetes cluster entirely. Our remote execution is now managed via a lightweight Prefect orchestration layer backed by AWS Batch for task execution, which is all created via an internal Terraform module.

This architecture creates a powerful separation of concerns: the remote execution environment holds absolutely no logic or data about the experiments or the project. It acts simply as a scalable execution engine. All critical information and state that needs to be persisted about the work is cleanly stored in Git (for configuration) or S3 (for artifacts).

Furthermore, because this entire remote backend can be stood up with a single Terraform module, each ML team or project can own and manage its own unique remote compute environment. This is a massive organizational win, as it eliminates the heavy coordination required when managing everyone's workloads on a single central cluster. If a team needs to make an infrastructure update, they can perform easy blue-green deployments of their own compute environment without risking disruption to another team. It scales down to zero when not in use, requires a fraction of the operational support, and still gives us massive parallel compute capabilities exactly when we need them.

module "remote_execution" {

source = "git@github.com:flock/traintrack.git//modules/remote-execution?ref=3.1.4"

remote_execution_name = "computer-vision"

efs_data_file_system_id = "fs-AAABBBCCCXXXYYYZZZ"

instance_type = "g5.xlarge"

ami_id = "ami-AAABBBCCCXXXYYYZZZ"

}

output "batch_execution_role_arn" {

value = module.remote_execution.batch_execution_role_arn

}

output "aws_batch_queue_arn" {

value = module.remote_execution.aws_batch_queue_arn

}

output "prefect_server_url" {

value = module.remote_execution.prefect_server_url

}

output "batch_aws_cloudwatch_log_group_name" {

value = module.remote_execution.batch_aws_cloudwatch_log_group_name

}Looking Ahead

Treating ML operations more like software build systems has dramatically improved developer velocity at Flock, in some cases 10x’ing our ability to make model updates. Engineers no longer waste time hunting down S3 URIs or battling UI configurations. They declare what they want to build, declare what it depends on, and let traintrack handle the graph execution, caching, and storage.

As we look to the future, we are continuing to evolve Traintrack's capabilities. First, we are focused on improving our monitoring and observability integrations, aiming to provide the rich visualizations and tracking that engineers expect from tools like TensorBoard and Weights & Biases. Second, we are working on extending our YAML specification to handle higher-level patterns of work natively, so complex but common ML operations (like cross-validation sweeps) will require only a simple configuration to execute across the graph, rather than manually defining every individual task.

Build What Matters at Flock

Flock Engineering is building technology that makes a real impact. We move fast, apply rigor where it matters, and stay focused on solving meaningful problems. If that sounds like you, explore our open career opportunities here.

Protect What Matters Most.

Discover how communities across the country are using Flock to reduce crime and build safer neighborhoods.

.webp)

.png)