Safety Is a Fundamental Right

Public safety technology should reduce harm without creating new risks. Our systems are designed with clear limits, built-in protections, and user accountability.

In plain terms: The system is designed to improve safety without giving anyone unchecked access.

Public safety technology should only be used for public safety purposes. It must follow local laws and have built-in limits to mitigate misuse.

That means: The rules aren’t optional. They’re built into how it works.

What Flock ALPR Does Not Do

We create products for public safety work, with clear limits designed to protect privacy and mitigate misuse.

ALPR Does Not Use Facial Recognition

Flock ALPR does not identify drivers or passengers. ALPR system searches are based on vehicle details, not faces.

In plain terms: It cannot recognize people.

ALPR Does Not Predict Crime

Flock ALPR does not assign risk scores or guess who might commit a crime. It supports investigations. It does not forecast behavior.

That means: No predictive policing.

ALPR Does Not Analyze Demographics

Flock ALPR does not collect or infer race, religion, gender, ethnicity, or other personal traits.

In plain terms: It does not profile people.

Designed to Reduce Harm

We combine technical limits, supervision tools, and clear rules to reduce misuse.

Use Is Purpose-Bound

ALPR system searches must relate to a specific investigation.

That means: You can’t search “just because.”

Evidence Over Assumptions

ALPR system search results are based only on observed vehicle characteristics.

In plain terms: The system reports what a vehicle looks like, not who someone is.

Decisions Stay Local

Your local agency decides where ALPR cameras are placed, who can access them, and what rules apply.

That means: Your community stays in control.

No Demographic Capture

The ALPR system does not collect or process demographic information.

In plain terms: It focuses on vehicles, not personal traits.

What Flock Does and Does Not Do

We build trust through transparency. We explain exactly what our system can and cannot do, so communities understand the limits and the built-in protections.

Misuse Is Preventable & Enforceable

Technology alone isn’t enough. So we make use visible. Every search is recorded, tied to a specific user, and supervisors can review activity.

Audit Trails

Every search is recorded and linked to a specific user. Managers can look at how the system is being used and address any breaches of policy.

That means: If someone misuses the system, there's a record of it.

Oversight Exists at Every Level

The use of public safety technology should follow local rules, include public input, and align with agency policy.

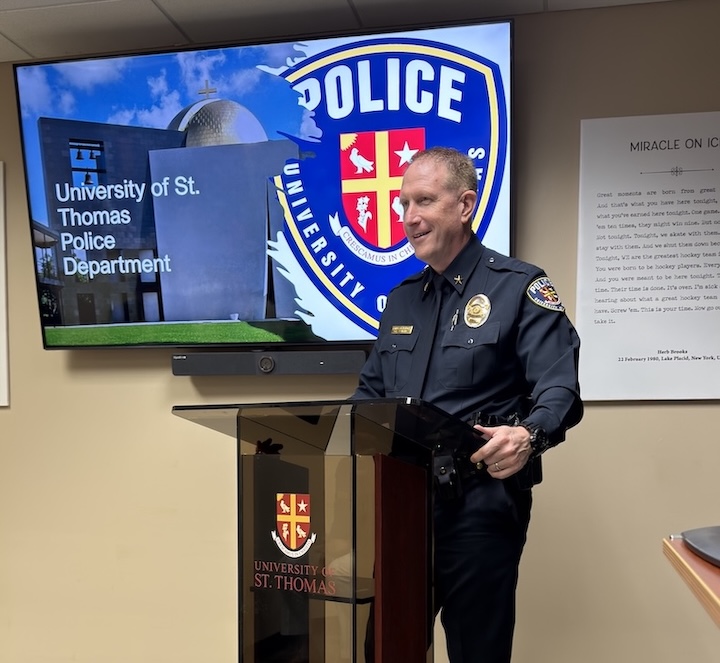

Community & Local Agencies

Local Decision Making

Communities decide whether to use this technology through public processes.

Rights & Safeguards Engagement

We consult privacy and policy experts to improve safeguards.

Safeguards Are Regularly Evaluated

We test safeguards, review what we learn, and tighten limits over time, because community expectations evolve.

Ongoing System Review

We regularly improve controls and protections to keep up with community expectations.

Evidence-Based Outcomes

We evaluate how our tools perform and what impact they have, both to make sure they are used responsibly and to guide improvements.

Refinement Through Findings

What we learn leads to updates in safeguards and documentation.

In plain terms: We don’t set rules once and forget them.

Frequently Asked Questions

No. The ALPR system is used for specific investigations under rules set by your local agency. Only approved users can access it. Every search is recorded. Data is deleted automatically after a set period (often 30 days, unless local law says otherwise).

In plain terms: Not everyone. Not all the time.

No. ALPR system searches must relate to a specific investigation and follow agency rules. Only authorized users can access the system. Every search is logged.

That means: No unrestricted watching.

No. The ALPR system does not recognize faces or identify people. ALPR system searches are based on vehicle characteristics only.

In plain terms: It cannot identify a person by their face.

No. The ALPR system does not predict who will commit a crime and does not assign risk scores.

That means: It supports investigations. It does not forecast behavior.

Your local agency decides where cameras are placed, who can access the system, and what rules apply. Communities participate through public discussions and with their elected officials.

In plain terms: Control stays local.

We regularly review our controls and safety measures so that our rules keep pace with new standards and community expectations. What we learn helps us update our safety features and the rules for managing the system.

That means: We measure, review, and improve. Continuously.
